March 19, 2026

How to choose an AI translation tool: a practical decision guide

There is no single best AI translation tool. There is the best tool for your content type, your stakes, and your workflow — and those three factors point to different answers depending on who you are and what you are translating.

A freelance translator preparing a client deliverable has a different problem than a legal team translating a regulatory filing. A developer building a multilingual product has different requirements than a marketing team localizing a campaign. A student translating a research paper has different stakes than a compliance officer submitting documents to a government authority.

The "best of" lists don't resolve this. They rank tools against each other, but the question you actually need answered is: given what I'm translating, what are the consequences if the output is wrong, and what tool matches that situation?

This guide answers that question with a framework, not a ranking.

In this article

- The four questions that determine which tool you need

- Decision path: matching your situation to the right tool

- When a single AI model isn't enough

- Which tool fits which use case

- FAQs

The four questions that determine which tool you need

Before choosing a tool, answer these four questions about your specific translation task. Your answers do most of the work.

Question 1: What type of content is this?

Content type determines how much contextual intelligence the tool needs. A customer support message requires conversational naturalness. A legal contract requires terminological precision. A technical manual requires structural preservation. Marketing copy requires cultural resonance. Each of these is a different translation problem, and different tools solve them better.

Question 2: What happens if this translation is wrong?

This is the stakes question, and it is the single most important factor in tool selection. For internal communications, a mistranslation is an inconvenience. For a client deliverable, it is a credibility problem. For a contract, it is a legal liability. For clinical documentation, it is a patient safety issue. The higher the stakes, the more the tool selection shifts toward verification — not just translation.

Question 3: How much volume are you handling?

Volume affects which tool is practical. For occasional, short translations, a free web interface is sufficient. For documents, teams, or ongoing workflows, you need a tool with document support, batch capability, or API access. Volume also affects cost — some tools are priced per character, others per document, others by subscription.
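To see how volume interacts with the three pricing models mentioned above, a rough comparison can be sketched in a few lines. This is a minimal illustration only: the `Workload` type, the function names, and every rate in the example are hypothetical placeholders, not any vendor's actual pricing.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    documents_per_month: int
    avg_chars_per_document: int


def monthly_costs(workload, per_char_rate, per_document_rate, subscription_fee):
    """Return the monthly cost under each of the three pricing models."""
    total_chars = workload.documents_per_month * workload.avg_chars_per_document
    return {
        "per_character": total_chars * per_char_rate,
        "per_document": workload.documents_per_month * per_document_rate,
        "subscription": subscription_fee,
    }


def cheapest_model(costs):
    """Name of the pricing model with the lowest monthly cost."""
    return min(costs, key=costs.get)


# Placeholder rates for illustration only; substitute each vendor's real pricing.
costs = monthly_costs(
    Workload(documents_per_month=40, avg_chars_per_document=12_000),
    per_char_rate=0.00002,
    per_document_rate=1.50,
    subscription_fee=25.00,
)
print(costs, cheapest_model(costs))
```

Rerunning the comparison with your own document counts and the published rates of the tools you are considering shows where the cheapest pricing model changes as volume grows.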

Question 4: Does your data need to stay within a controlled environment?

For healthcare, legal, and other regulated industries, where your data goes matters as much as the quality of the translation. Some tools send data to cloud servers outside your control. Others can be self-hosted. MachineTranslation.com's platform handles data processing under defined terms, which is relevant if you are translating confidential client content or regulated documents.

Decision path: matching your situation to the right tool

Run through the branches below based on your answers to the four questions above.

Are the stakes low? (Internal comms, personal use, quick reference)

For content where a mistranslation is an inconvenience rather than a liability (internal memos, personal correspondence, quick reference, travel), any mainstream tool delivers sufficient quality at minimal cost.

Google Translate covers 249 languages, requires no setup, and handles the broadest range of language pairs. The December 2025 Gemini upgrade improved handling of idioms and conversational content. For personal and informational use, it remains the highest-coverage free option.

DeepL produces more natural-sounding output for European language pairs and is worth using when fluency matters more than breadth — polished internal documents, European market communications, informal but professional correspondence.

The practical rule: for low-stakes content, pick the tool that covers your language pair and move on. The difference between tools matters far less here than it does at higher stakes.

Are the stakes moderate? (Client-facing, published, professional)

For content that leaves your organization and carries your name (client communications, published blog posts, product descriptions, proposals), the standard shifts. You need output that is accurate enough to send without second-guessing.

This is where a tool's quality signal matters. Most single-model tools give you one output with no indication of how reliable it is. If you do not speak the target language, you have no way to assess whether the output is right.

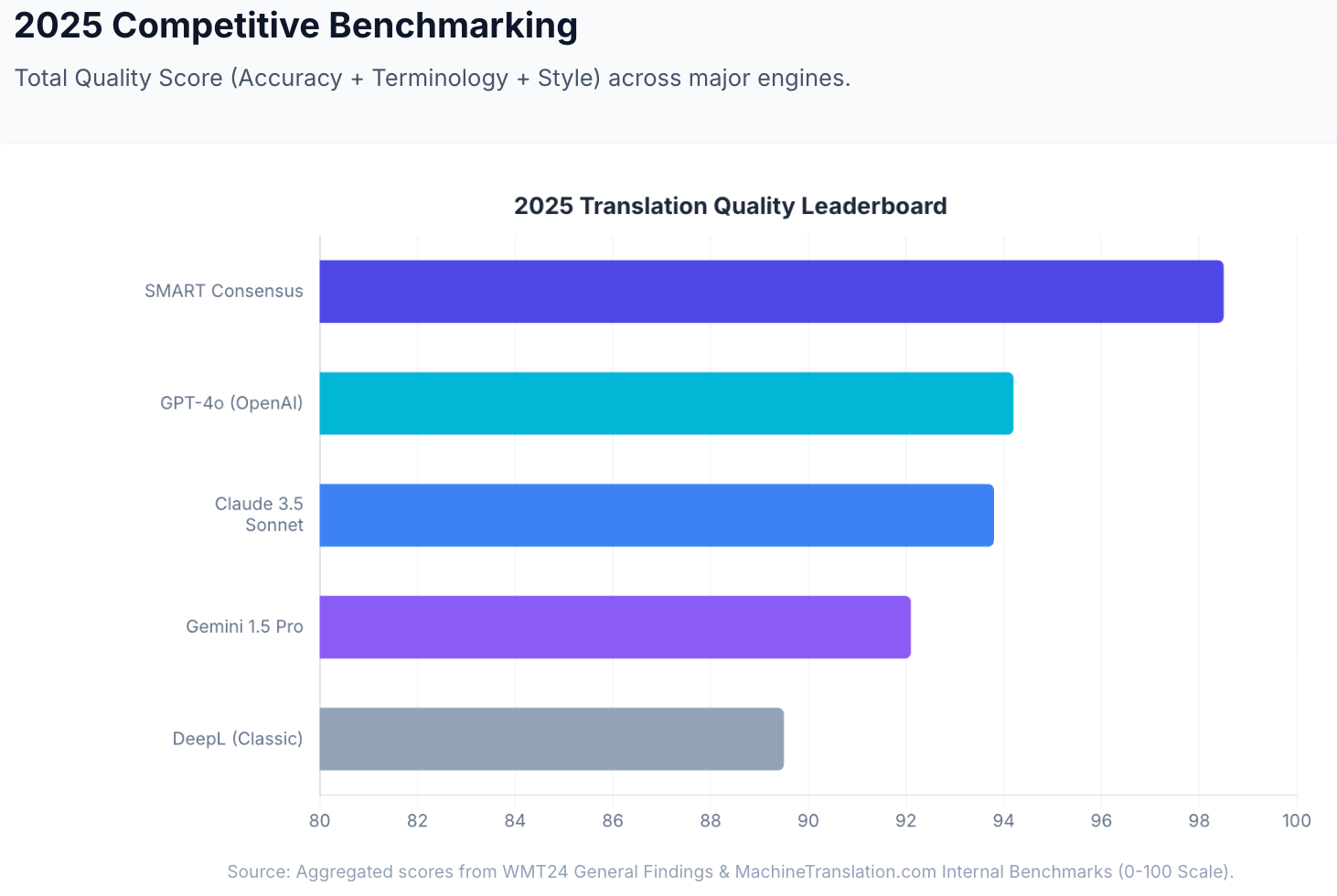

ChatGPT / Claude / Gemini (the major LLMs) produce strong contextual output for this tier, particularly for content where sounding natural in the target language is important. They handle idiomatic expression well, follow register instructions when given explicit prompting, and produce output that professional translators have rated favorably in blind studies. Claude 3.5 ranked first in 9 of 11 language pairs at WMT24; GPT-4o scores 94.2/100 in MachineTranslation.com's internal benchmarks. Source: MachineTranslation.com internal benchmarks and WMT24 General Machine Translation Findings.

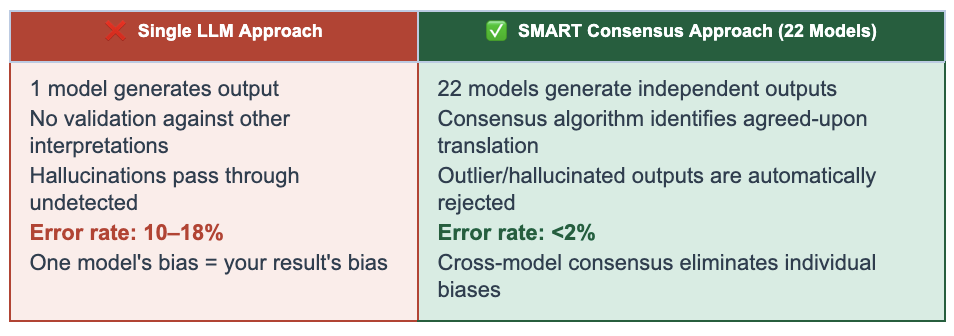

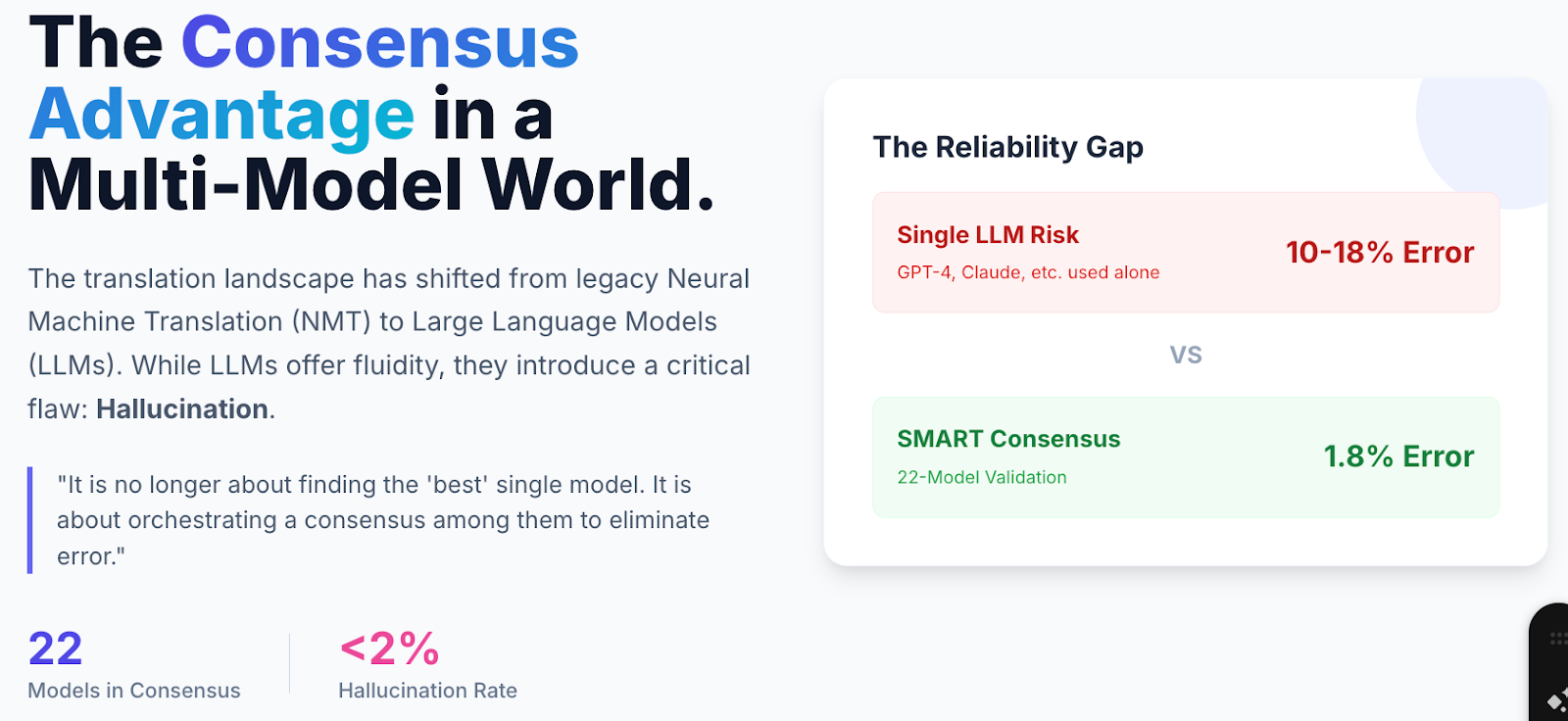

The limitation: even a strong single-model output gives you one answer with no cross-check. For moderate-stakes content being sent to clients or published publicly, the practical approach is to use MachineTranslation.com's SMART system, which runs 22 models simultaneously (including ChatGPT, Claude, Gemini, DeepL, and Google) and selects the output the majority agrees on. Internal data shows that users who switched to SMART spent 27% less time verifying outputs than with single-engine use. Source: MachineTranslation.com internal data.
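The idea of selecting the output that most engines agree on can be illustrated with a simple majority vote. This is a minimal sketch, not a description of how SMART is implemented: it assumes agreement means an exact match after normalizing case and whitespace, whereas a production system would more plausibly compare outputs by similarity. The function names and the engine labels are hypothetical.

```python
from collections import Counter


def normalize(text):
    """Collapse whitespace and case so trivially different outputs match."""
    return " ".join(text.lower().split())


def majority_translation(candidates):
    """Pick the candidate that the most engines agree on.

    candidates maps an engine name to its translation. Returns the winning
    text and the share of engines that produced it (0.0 to 1.0).
    """
    if not candidates:
        raise ValueError("at least one candidate is required")
    counts = Counter(normalize(t) for t in candidates.values())
    winner_key, votes = counts.most_common(1)[0]
    # Return the first original (un-normalized) text that matches the winner.
    winner = next(t for t in candidates.values() if normalize(t) == winner_key)
    return winner, votes / len(candidates)


# Hypothetical engine outputs for one German sentence.
outputs = {
    "engine_a": "Der Vertrag endet am 31. Dezember.",
    "engine_b": "Der Vertrag endet am 31. Dezember.",
    "engine_c": "Der Vertrag läuft am 31. Dezember aus.",
}
best, agreement = majority_translation(outputs)
print(best, round(agreement, 2))
```

The returned share doubles as a rough quality signal: a winner backed by most engines deserves more trust than one that barely edged out the alternatives.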

At this tier, even MachineTranslation.com's free plan (no sign-up required) gives you consensus-verified output for the same cost as using a single tool alone.

Are the stakes high? (Legal, medical, regulated, compliance)

For content where a wrong translation creates professional liability or poses safety risks (contracts, court filings, clinical documentation, regulatory submissions, compliance reports), the tool selection question is different in kind, not just degree.

At this level, the requirement is not just quality. It is accountability. Someone qualified needs to have reviewed the output and be responsible for its accuracy.

MachineTranslation.com's two-layer approach addresses this directly. SMART's 22-model consensus reduces critical translation errors to under 2%, catching the model-specific hallucinations and terminological errors that any individual model can produce. Internal benchmarks show SMART reaching 98.5/100 on translation quality versus 94.2 for the best individual models. But for content where the standard is a qualified professional's sign-off, Human Verification escalates any translation to a certified human reviewer within the same platform: no external agency, no vendor change, no separate contract — 100% accuracy guaranteed. Source: MachineTranslation.com internal benchmarks.

For legal professionals specifically: the workflow is AI consensus first (SMART), then Human Verification for the final document or flagged passages. This gives you the efficiency of AI with the accountability that regulated content requires.

For medical and clinical content: the same two-layer approach applies. SMART flags where the 22 models disagree (precisely the passages most likely to contain terminological uncertainty), allowing reviewers to focus attention where it is needed rather than reviewing every line.
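One way to direct reviewer attention is to measure agreement per segment and flag only the segments that fall below a threshold. The sketch below assumes exact-match agreement and a hypothetical threshold of 0.8. The function names, the threshold, and the sample segments are illustrative and are not taken from the platform.

```python
from collections import Counter


def agreement_ratio(segment_outputs):
    """Share of engines that produced the most common output for a segment."""
    counts = Counter(" ".join(o.lower().split()) for o in segment_outputs)
    return counts.most_common(1)[0][1] / len(segment_outputs)


def segments_needing_review(document, threshold=0.8):
    """Return indexes of segments whose agreement falls below threshold.

    document is a list of segments; each segment is the list of outputs
    that the different engines produced for it.
    """
    return [
        index
        for index, outputs in enumerate(document)
        if agreement_ratio(outputs) < threshold
    ]


# Hypothetical outputs from five engines for two segments.
document = [
    ["take one tablet daily"] * 5,
    ["take twice daily", "take two daily", "take twice daily",
     "take every two days", "take twice daily"],
]
flagged = segments_needing_review(document)
print(flagged)
```

In this example only the second segment is flagged, because three of five engines agree on it, which is below the threshold. A reviewer would start there instead of reading every line.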

No single AI tool at any quality tier can provide this accountability layer. It requires human expertise in the loop.

Are you a developer or building a product?

If you are integrating translation into a software product, the selection criteria shift again. You need API access, reliability, and a response that tells you something about output quality — not just the translated text.

Google Translate API / DeepL API / OpenAI API are the standard paths. They are well-documented and integrate easily into most stacks. The trade-off is that each returns a single translation with no quality signal.

MachineTranslation.com's API returns the consensus output of 22 models plus a confidence signal based on how strongly the models agreed. For products where translation quality directly affects user trust (legal platforms, medical tools, compliance workflows, professional services), the difference between a single-model API response and a consensus-verified one is structurally significant.
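The branches of this decision path can be condensed into a small function. This is one possible reading of the guide, offered as a sketch: the `Stakes` enum, the `recommend` function, and the returned labels are hypothetical names, and the handling of the developer branch (a single-model API only when stakes are low) is an assumption.

```python
from enum import Enum


class Stakes(Enum):
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"


def recommend(stakes, building_product=False):
    """Map the guide's branches to a recommended approach."""
    if building_product:
        # Assumption: a plain single-model API is acceptable only at low stakes.
        if stakes is Stakes.LOW:
            return "single-model translation API"
        return "API that returns consensus output with a confidence signal"
    if stakes is Stakes.LOW:
        return "any mainstream tool that covers the language pair"
    if stakes is Stakes.MODERATE:
        return "multi-model consensus output"
    return "multi-model consensus plus qualified human verification"


print(recommend(Stakes.HIGH))
print(recommend(Stakes.MODERATE, building_product=True))
```

Content type, volume, and data-control requirements from the four questions would refine the choice within each branch; they are left out here to keep the sketch short.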

When a single AI model isn't enough

Every single AI model (regardless of how strong it is) produces errors it cannot detect in its own output. This is not a quality problem that better models will eventually solve. It is a structural property of how individual models work: they generate the most probable output given their training, but they have no mechanism to catch the specific errors that their own probability distribution produces.

In MachineTranslation.com's internal benchmarks, individual top-tier models score between 93 and 94 out of 100 on translation quality. SMART (which runs 22 models simultaneously and identifies the output the majority agrees on) reaches 98.5. The gap between 94 and 98.5 represents the model-specific errors that individual models produce and cannot self-correct. Source: MachineTranslation.com internal benchmarks and WMT24 General Machine Translation Findings.

In practical terms: if you are translating content where you cannot afford to be wrong, and you do not speak the target language well enough to catch errors yourself, a single-model tool is not a sufficient safeguard. This is true of Google Translate, DeepL, ChatGPT, Gemini, and Claude equally — each is capable, each is a single model, and none of them can cross-check their own output.

The internal MachineTranslation.com data is specific: 34% of users admitted they were not confident enough in a single AI output to publish it without checking. Among non-linguists, 46% said they spent more time manually comparing outputs across multiple tools than the AI itself saved them. SMART addresses this by doing the comparison automatically — 22 models, one AI-verified translation. Source: MachineTranslation.com internal data.

Which tool fits which use case

| Use case | Recommended tool | Why |
|---|---|---|
| Personal / travel / quick reference | Google Translate | 249 languages, free, no setup |
| European language pairs, polished output | DeepL | Lowest post-edit burden for EU pairs |
| Marketing, creative, tone-sensitive content | ChatGPT or Claude | Contextual depth, iterative refinement |
| General professional / client-facing content | MachineTranslation.com | Consensus output |
| Technical documentation / large files | MachineTranslation.com (document upload) | Original layout preserved |
| Legal / compliance / regulated content | MachineTranslation.com (SMART + Human Verification) | 22-model consensus + qualified human sign-off in-platform |
| Developer / API integration | MachineTranslation.com API or Google/DeepL API | Consensus output + quality score vs. single-model API |
| Enterprise Microsoft environment | Microsoft Translator | Native Microsoft 365 integration, data residency |

The short version: for personal and low-stakes use, the free tools are sufficient and Google Translate's language breadth is hard to beat. For anything professional (especially anything client-facing or published), the question is how confident you need to be in the output before you send it. MachineTranslation.com is free to use and takes three seconds to run. It tells you immediately whether the 22 models agreed strongly or flagged uncertainty. For content where that uncertainty matters, Human Verification is available within the same platform.

Start free at MachineTranslation.com, no sign-up required.

FAQs

1. How do I choose the right AI translation tool?

Start with two questions: what type of content are you translating, and what are the consequences if it is wrong? For casual, personal, or internal content, any mainstream free tool is sufficient. For professional, client-facing, or regulated content, you need a tool that provides a quality signal — not just a translation. MachineTranslation.com's SMART consensus runs 22 models and returns an AI-verified translation showing how confident the result is, free with no sign-up.

2. Is Google Translate good enough for professional use?

For informational and general content, yes: Google Translate's Gemini-upgraded quality and 249-language coverage make it a strong free option. For client deliverables, legal documents, or regulated content, a single-model output without a quality signal is not a sufficient safeguard. Professional use requires either cross-model consensus or human verification of the output.

3. What is the best AI translation tool for legal documents?

For legal documents, the appropriate workflow is consensus-based AI translation first (to catch model-specific errors), followed by Human Verification from a qualified professional for final accuracy. MachineTranslation.com provides both within the same platform: 22-model SMART consensus and in-platform Human Verification with a 100% accuracy guarantee. No external agency or separate vendor is required.

4. What should I use for translating technical documents?

Technical translation requires two things: terminological consistency across the full document, and structural preservation (formatting, tags, layout). MachineTranslation.com supports large files in PDF, DOCX, TXT, CSV, XLSX, and image formats, with the original layout preserved. The consensus across 22 models applies terminology consistency checks that individual models cannot self-enforce. For critical technical content, Human Verification provides a final qualified review.

5. What is the difference between using ChatGPT and MachineTranslation.com for translation?

ChatGPT is one of the 22 models inside MachineTranslation.com's system. When you use MachineTranslation.com, you receive the output that ChatGPT and 21 other models (including Claude, Gemini, DeepL, and Google) agree on, along with their individual outputs side by side. Using ChatGPT directly gives you one output with no cross-check. The consensus approach catches errors that are specific to ChatGPT's output without affecting the quality ChatGPT contributes to the agreed result.

6. When do I need human verification for a translation?

Human verification is appropriate when: (a) the content has legal, medical, or regulatory consequences; (b) you cannot assess the output quality yourself because you do not speak the target language; or (c) the content will be submitted to an authority or published under your organization's name. MachineTranslation.com's Human Verification provides a 100% accuracy guarantee from a certified professional reviewer, in-platform, with no external vendor required.